To hack a Large Language Model: Speak its style, but not its language.

On building better RAG systems, attacks in plain sight, and a secret Croatian translation project that practically predicted the tech we're using today.

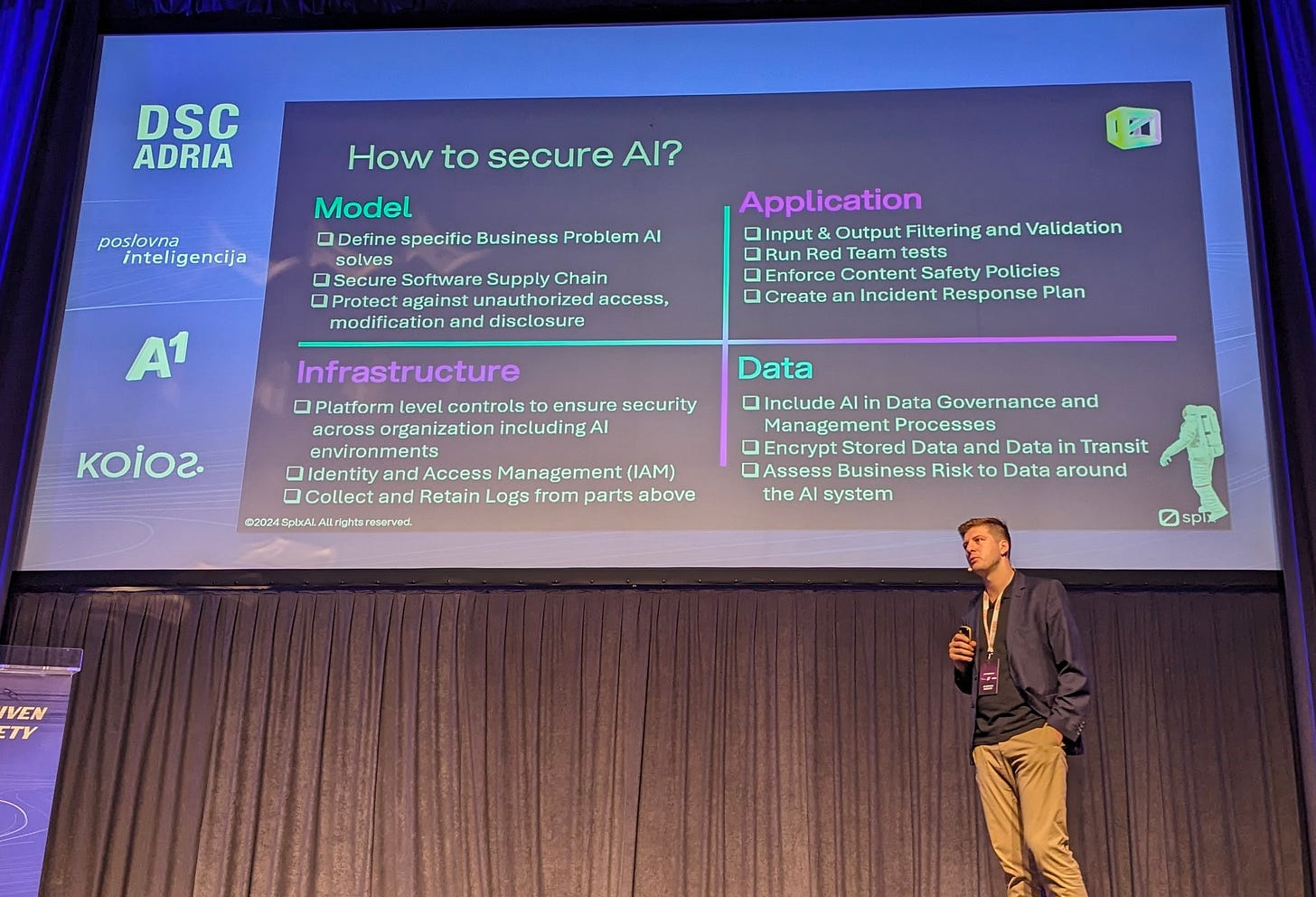

Today’s post continues sharing my key takeaways from DSC Adria in Zagreb, Croatia. While last time I focussed on high-level data and AI topics like strategy, trends and future predictions in the (Generative) AI space, this time I’m getting into the details: look out for pr…